Review of Machine Learning Algorithms for Diagnosing Mental Illness


Abstract

Objective

Advances in computer and internet technology have improved both the scale and the quality of data in many areas, including the healthcare sector. Machine Learning (ML) has played a pivotal role in analyzing such big data efficiently, but general misunderstandings about ML algorithms persist when they are applied (e.g., that ML techniques can solve the problem of small sample sizes, or that deep learning is synonymous with ML). This paper reviews research on diagnosing mental illness with ML algorithms and suggests how ML techniques can be employed and how they work in practice.

Methods

Studies on the diagnosis of mental illness using ML techniques were carefully reviewed. Five traditional ML algorithms frequently used in mental health research, namely Support Vector Machines (SVM), Gradient Boosting Machine (GBM), Random Forest, Naïve Bayes, and K-Nearest Neighbors (KNN), were systematically organized and summarized.

Results

The literature review showed that SVM, GBM, Random Forest, Naïve Bayes, and KNN were frequently employed in the mental health area, but many researchers did not explain why they chose their ML algorithm, even though each algorithm has its own advantages. In addition, several studies applied ML algorithms without fully understanding the characteristics of their data.

Conclusion

Researchers using ML algorithms should be aware of the properties of their algorithms and of the limitations of results obtained under restricted data conditions. This paper provides useful information on the properties and limitations of each ML algorithm in mental health practice.

INTRODUCTION

The rapid advancement of computer and internet technology has led to an exponential expansion of data in every field, such as the large volume of student data collected by the OECD in education and customer-related data from Walmart and Facebook in industry [1-7]. Such growth has also occurred in the health domain. In many countries, personal health information on patients and populations has been digitized in electronic health record (EHR) systems. In 2012, EHRs produced around 500 petabytes of data, and this volume was expected to reach 25,000 petabytes by 2020 [5,8]. Moreover, the growth is not restricted to quantity. Developments in medical technology make it possible to measure various aspects of human biology, such as genes, cerebral blood flow, and EEG, at a lower cost and with higher accuracy than before [9-11]. These large, high-quality data have great potential to advance the healthcare sector by deepening our understanding of disease mechanisms, but analyzing them efficiently requires approaches different from traditional ones.

Machine Learning (ML) has been acknowledged as an appropriate method for analyzing big data. ML, conceptually proposed by Alan Turing and named by Arthur Samuel in the 1950s [12,13], has been widely used in various fields, including medicine, since the 1990s, owing to the sustained efforts of ML researchers and rapid developments in computing power. ML techniques are well suited to big data because ML researchers had already studied and devised scalable algorithms for processing large-scale data even before the importance of big data was recognized [14]. In addition, some ML techniques allow a model to learn properly from data with many variables relative to the number of cases [15,16]. Thus, ML can be regarded as ‘an essential part of big data analytics’ [14,17], and it has contributed to resolving issues in healthcare such as early diagnosis of disease, real-time patient monitoring, patient-centric care, and improvement of treatment [18].

However, because the press has highlighted only the successes of ML, such as AlphaGo beating a human Go champion [19], ML has started to look like a magic wand to some people outside the field. In our recent mental health research, a clinician expected that ML algorithms could compensate for a small sample size, or could produce a proper diagnosis without expert diagnoses even for training. In addition, Deep Learning or Neural Network algorithms, well known as the main algorithms of Artificial Intelligence (AI), were thought to be all there is to ML. Such confusion hinders clinical research using ML techniques, which is usually conducted collaboratively by domain experts and ML researchers.

Despite their great advantages, ML techniques are clearly ‘not a panacea that would automatically’ yield generalizable or more accurate results without a large, high-quality dataset and human guidance for training [20,21]. Many ML algorithms other than deep learning are used to analyze clinical data, and each has its own advantages. It is therefore worthwhile to organize the background knowledge about ML algorithms needed for applied clinical research, together with empirical examples of how ML has actually been employed in the clinical field. This information can serve as a guideline that facilitates communication between clinical and ML researchers and makes their collaborative research more efficient. Section 2 introduces the basic concepts of ML and traditional supervised learning algorithms for clinical research. In Section 3, we focus on several examples of mental health research using ML and examine the algorithms used and their performance. In Section 4, we summarize the implications and discuss points to note when using these algorithms.

TYPES OF MACHINE LEARNING TECHNIQUES

The primary purposes of ML techniques are to analyze data, to predict target features of the data, and to derive meaning from the given data. Here, we introduce the two main types of ML, supervised learning and unsupervised learning, which differ in the data they require and the purpose of the analysis. The ML algorithms used in previous work on mental health data mostly fall into these two types. We also introduce representative algorithms of each type with examples.

Besides these two main types, another main type of ML is reinforcement learning (RL). We do not cover RL in this paper because mental health data have generally not been formulated as RL problems. The main purpose of RL is for agents to learn optimal behavior in a given environment through repeated interaction with it. This does not apply to our case, where the given data are a set of attributes and their corresponding values.

SUPERVISED LEARNING

Supervised learning (SL) is the most common approach in ML-based diagnosis. In the SL setting, the given data must be labeled: every data instance is represented by a set of attributes with their corresponding values, together with a label. Attributes are the features that describe a data instance; for example, personal characteristics such as height, body weight, and eye color can be attributes describing a person, that is, a data instance. A label is the value of a specific target attribute of a data instance that we want to predict from the other attributes. The main purpose of a supervised ML model is to predict the labels of unseen instances from their attributes, so an SL model can be viewed as a mapping from attributes to a label. A label can be either a discrete value (a class) or a number in a continuous space. If labels are classes, the ML task is a classification problem; if labels are numbers in a continuous space, the task is a regression problem.

Consider, as an example of an SL setting, the classification of patients' diseases: each patient is a data instance, the patient's measured conditions are attributes, and the patient's disease is the class. The ML algorithm uses the attributes to predict the disease class. Given the measured data of a number of patients, an ML model can be built and then used to pre-screen patients for disease before diagnosis by medical professionals. Specifically, SL algorithms require subjects with measured attribute values and labels that represent their diagnoses. The actual diagnosis labels supervise the training of the ML model. Once the model has been trained, it can predict the diagnosis labels of new patients from their measured attributes.
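The train-then-predict workflow described above can be sketched in a few lines of Python. This is a minimal illustration rather than one of the five algorithms reviewed here: the "model" is a single learned cut-off on one attribute (a decision stump). The symptom scores, the diagnosis labels, and the function name `fit_threshold_rule` are hypothetical.

```python
def fit_threshold_rule(scores, diagnosed):
    """Training: pick the cut-off on one attribute that classifies the most
    labeled training patients correctly, assuming higher scores indicate disease."""
    candidates = sorted(set(scores))
    best_cut, best_correct = None, -1
    # Try the midpoint between every pair of adjacent observed values.
    for cut in ((lo + hi) / 2 for lo, hi in zip(candidates, candidates[1:])):
        correct = sum((s > cut) == label for s, label in zip(scores, diagnosed))
        if correct > best_correct:
            best_cut, best_correct = cut, correct
    return best_cut

# Hypothetical labeled training data: one attribute (a symptom score) per
# patient, with the clinician's diagnosis as the label.
scores = [12, 15, 9, 22, 27, 25]
diagnosed = [False, False, False, True, True, True]

cut = fit_threshold_rule(scores, diagnosed)  # training step: learns 18.5
new_patient_score = 20                       # attribute measured, label unknown
print(new_patient_score > cut)               # prediction: True
```

Real diagnostic models use many attributes and more expressive mappings, but the division of labor is the same: labeled cases fix the model's parameters, and new cases only pass through the learned mapping.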

UNSUPERVISED LEARNING

Unsupervised learning, unlike supervised learning, does not require target labels for the model to predict. Its main purpose is not to predict target attributes but to find structure in data without supervision. Examples include identifying similarities between data instances, discovering relationships among attributes, and transforming attributes to reduce dimensionality. Unsupervised learning methods have not been used frequently in the clinical field, because most clinical research using ML has aimed to develop tools for diagnosing diseases whose diagnostic criteria were already established as gold standards. In our literature review, it was hard to find a study that used an unsupervised learning method directly for diagnostic purposes.

Unsupervised learning can, however, be used for additional analysis alongside supervised learning. The disease-classification example from the previous section can also illustrate unsupervised learning. Although disease types serve as labels in supervised learning, the label information can be set aside and an extra analysis conducted with unsupervised learning. If the data instances are grouped into fewer groups than there are diseases, the resulting groups can indicate which diseases are similar. Conversely, if they are grouped into more groups than there are diseases, a single disease may be subdivided for further analysis.
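To illustrate the grouping described above, the following sketch runs k-means clustering, one common unsupervised method chosen here for illustration (the text does not name a specific algorithm), on invented two-attribute patient data. The disease labels are deliberately never passed to the function. The data, the deterministic farthest-point initialization, and the function name are assumptions of this sketch.

```python
from math import dist

def k_means(points, k, iterations=20):
    """Partition unlabeled points into k groups (Lloyd's algorithm)."""
    # Deterministic farthest-point initialization: start from the first point,
    # then repeatedly add the point farthest from every center chosen so far.
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(dist(p, c) for c in centers)))
    for _ in range(iterations):
        # Assignment step: each point joins the group of its nearest center.
        groups = [[] for _ in range(k)]
        for p in points:
            groups[min(range(k), key=lambda i: dist(p, centers[i]))].append(p)
        # Update step: move each center to the mean of its group.
        for i, members in enumerate(groups):
            if members:  # keep the previous center if a group is empty
                centers[i] = tuple(sum(col) / len(members) for col in zip(*members))
    return groups

# Hypothetical measurements. Patients with disease A fall into two subgroups;
# patients with disease B form one group. Only the attributes are used.
a_sub1 = [(0.0, 0.0), (0.5, 0.2), (0.2, 0.6)]
a_sub2 = [(0.1, 5.0), (0.4, 5.3), (-0.2, 4.8)]
b = [(10.0, 0.1), (10.3, -0.2), (9.8, 0.4)]
points = a_sub1 + a_sub2 + b

print([len(g) for g in k_means(points, k=2)])  # [6, 3]: A's subgroups merge
print([len(g) for g in k_means(points, k=3)])  # [3, 3, 3]: A is subdivided
```

With two groups the two subgroups of disease A fall together, while asking for three groups splits disease A, mirroring the coarser and finer groupings described in the text.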

SUPERVISED LEARNING EXAMPLES

In this paper, we focus on the SL setting described in the example above. Supervised learning suits the purpose of diagnosis, since diagnosis can be viewed as evaluating, or predicting, a patient's condition from measured quantities. Because so many SL methods exist, we do not cover all of them in detail. Instead, we briefly describe representative SL algorithms used frequently in many studies: Support Vector Machines (SVM), Gradient Boosting Machine (GBM), Random Forest, Naïve Bayes, and K-Nearest Neighbors (KNN).

SVM is one of the best-known and most widely used supervised learning methods [22]. Its fundamental concept is based on binary classification, in which we want to divide the given data points into two classes. Given data points distributed in the feature space, SVM finds the boundary (a hyperplane) that separates the two classes with the largest possible margin, that is, the largest distance to the nearest data points of each class. A linear division is the simplest way to divide a space. In general, however, data points cannot be divided well by a flat boundary. To handle complicated non-linear cases, the kernel trick is used, and regularization (a soft margin) allows some points to fall inside the margin or on the wrong side of it. SVM variants also exist for multi-class classification and regression. SVM is known to work well in practice, but this requires careful selection of the kernel and appropriate data preprocessing.
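A minimal sketch of the linear case, assuming a soft-margin SVM trained by full-batch subgradient descent on its primal objective (the regularized hinge loss). This is only one of several ways to fit an SVM; widely used solvers such as LIBSVM optimize the dual problem instead, and the kernel trick for non-linear boundaries is not shown. The toy data, the regularization strength `lam`, the learning rate, and the epoch count are illustrative assumptions.

```python
def train_linear_svm(X, y, lam=0.01, learning_rate=0.1, epochs=1000):
    """Minimize lam/2 * ||w||^2 + mean(max(0, 1 - y_i * (w . x_i + b)))
    by full-batch subgradient descent. Labels y_i must be +1 or -1."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]  # gradient of the regularizer
        grad_b = 0.0
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:  # inside the margin or misclassified: hinge loss is active
                grad_w = [g - yi * xj / n for g, xj in zip(grad_w, xi)]
                grad_b -= yi / n
        w = [wj - learning_rate * g for wj, g in zip(w, grad_w)]
        b -= learning_rate * grad_b
    return w, b

def predict(w, b, x):
    """Which side of the separating hyperplane w . x + b = 0 the point lies on."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Hypothetical two-attribute data for two classes (+1 and -1).
X = [(2.0, 1.5), (1.5, 2.5), (3.0, 2.0), (-2.0, -1.0), (-1.5, -2.5), (-3.0, -2.0)]
y = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(X, y)
print(predict(w, b, (2.5, 2.0)))  # 1
```

A smaller `lam` penalizes margin violations more heavily relative to the size of `w`, which approaches the hard-margin SVM; a larger `lam` tolerates more violations in exchange for a wider margin.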

GBM is a boosting method, an ensemble technique that combines a set of weak learners into a strong learner to obtain better performance [23]. Decision trees, which divide the given data in stages using a hierarchy of conditions, are generally used as weak learners because of their simplicity. Suppose the current set of weak learners does not fit the given data well. We add another weak learner that works well on the part of the data where the current set performs poorly, so the new set, including the added learner, performs better on the data. In other words, a boosting method chooses each new weak learner on the basis of the data points that the current set handles poorly. In gradient boosting specifically, each new learner is fitted to the negative gradient of the loss function with respect to the current predictions; for squared-error loss, this is simply the residuals. To aggregate the weak learners, each learner is given a weight when it is added to the set. Because each new learner depends on the errors of the ensemble built so far, the learners must be trained one after another, so training usually takes longer than for other methods. The model usually works well in practice.
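The boosting loop can be sketched for the simplest case, assuming squared-error loss on a numeric target, where the negative gradient of the loss is just the residual (target minus current prediction). Each weak learner is a one-split decision tree (a stump) fitted to the current residuals, and every learner receives the same fixed weight, the learning rate (often called shrinkage). The toy data and the values of `n_learners` and `learning_rate` are illustrative assumptions; classification GBMs use a different loss, such as log-loss, and the stochastic variant additionally fits each learner on a random subsample of rows.

```python
def fit_stump(X, residuals):
    """Least-squares regression stump: one split on one attribute."""
    best = None
    for j in range(len(X[0])):
        values = sorted({x[j] for x in X})
        for t in ((lo + hi) / 2 for lo, hi in zip(values, values[1:])):
            left = [r for x, r in zip(X, residuals) if x[j] <= t]
            right = [r for x, r in zip(X, residuals) if x[j] > t]
            lv, rv = sum(left) / len(left), sum(right) / len(right)
            sse = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
            if best is None or sse < best[0]:
                best = (sse, j, t, lv, rv)
    if best is None:  # all attributes constant: predict the mean residual
        mean = sum(residuals) / len(residuals)
        return lambda x: mean
    _, j, t, lv, rv = best
    return lambda x: lv if x[j] <= t else rv

def gradient_boost(X, y, n_learners=200, learning_rate=0.1):
    base = sum(y) / len(y)  # initial model: the mean target
    learners, pred = [], [base] * len(y)
    for _ in range(n_learners):
        # Residuals mark where the current ensemble still does poorly.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        stump = fit_stump(X, residuals)
        learners.append(stump)
        pred = [p + learning_rate * stump(x) for p, x in zip(pred, X)]
    return lambda x: base + learning_rate * sum(h(x) for h in learners)

# Hypothetical data in which the target adds up the effects of two attributes.
X = [(-1, -1), (-1, 1), (1, -1), (1, 1)]
y = [0.0, 3.0, 2.0, 5.0]
model = gradient_boost(X, y)
print([round(model(x), 2) for x in X])  # [0.0, 3.0, 2.0, 5.0]
```

No single stump can represent this target, since each splits on only one attribute, but the sequence of stumps, each correcting what the previous ones missed, recovers it.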

Random Forest is another ensemble technique using decision trees [24]. Unlike GBM, which is a boosting method, Random Forest is a bagging method and uses its trees differently. Both methods combine many trees, but instead of fitting each new tree to the data points that the current ensemble handles poorly, Random Forest trains each tree independently on a bootstrap sample of the data (a random sample drawn with replacement) and, at each split, searches for the best feature among a random subset of features. Many different randomly generated decision trees are thus merged into one learner, which is why the method is called Random Forest; for classification, the trees' predictions are combined by majority vote. Perhaps surprisingly, this combination of randomized trees works well in practice because the randomness helps the model avoid overfitting the data. However, prediction can be slow when the forest contains many trees, and performance depends on model parameters such as the number of trees and the maximum depth of the trees, which also affect prediction time.
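The two sources of randomness described above can be sketched as follows: each tree is grown on a bootstrap sample of the rows, each split considers only a random subset of the attributes, and the trees vote. For brevity the trees are shallow, splits are scored with Gini impurity, and labels are assumed not to be dicts (dicts mark internal nodes). The data and the parameter values `n_trees`, `max_depth`, and `n_features` are illustrative assumptions; library implementations typically grow much deeper trees.

```python
import random
from collections import Counter

def gini(labels):
    """Gini impurity: 0 for a pure node, larger when classes are mixed."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def build_tree(X, y, depth, max_depth, n_features, rng):
    """Grow a classification tree; each split examines a random subset of attributes."""
    majority = Counter(y).most_common(1)[0][0]
    if depth == max_depth or len(set(y)) == 1:
        return majority  # leaf: predict the majority label
    best = None
    for j in rng.sample(range(len(X[0])), n_features):  # random attribute subset
        values = sorted({x[j] for x in X})
        for t in ((lo + hi) / 2 for lo, hi in zip(values, values[1:])):
            left = [i for i, x in enumerate(X) if x[j] <= t]
            right = [i for i, x in enumerate(X) if x[j] > t]
            score = (len(left) * gini([y[i] for i in left])
                     + len(right) * gini([y[i] for i in right]))
            if best is None or score < best[0]:
                best = (score, j, t, left, right)
    if best is None:  # the sampled attributes are constant here
        return majority
    _, j, t, left, right = best

    def grow(idx):
        return build_tree([X[i] for i in idx], [y[i] for i in idx],
                          depth + 1, max_depth, n_features, rng)

    return {"feature": j, "threshold": t, "left": grow(left), "right": grow(right)}

def predict_tree(node, x):
    while isinstance(node, dict):  # internal nodes are dicts, leaves are labels
        node = node["left"] if x[node["feature"]] <= node["threshold"] else node["right"]
    return node

def random_forest(X, y, n_trees=25, max_depth=3, n_features=1, seed=0):
    rng = random.Random(seed)
    trees = []
    for _ in range(n_trees):
        rows = [rng.randrange(len(X)) for _ in X]  # bootstrap: rows drawn with replacement
        trees.append(build_tree([X[i] for i in rows], [y[i] for i in rows],
                                0, max_depth, n_features, rng))

    def predict(x):
        # Majority vote across all trees.
        return Counter(predict_tree(tree, x) for tree in trees).most_common(1)[0][0]

    return predict

# Hypothetical two-attribute data for two groups of subjects.
X = [(0.1, 0.3), (0.5, -0.2), (-0.3, 0.4), (0.2, 0.0),
     (4.8, 5.1), (5.3, 4.6), (4.4, 5.5), (5.0, 4.9)]
y = ["control"] * 4 + ["patient"] * 4
forest = random_forest(X, y)
print(forest((0.0, 0.1)), forest((5.1, 5.0)))  # control patient
```

Raising `n_trees` makes the vote more stable but, as the text notes, also lengthens prediction time, because every tree must be evaluated for each new case.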

Naïve Bayes is a probabilistic model that predicts the class with the highest posterior probability, computed from a chain of conditional probabilities using Bayes' theorem [25]. It is called ‘naïve’ because it assumes that all measured attributes are independent of one another given the class, although in the real world attributes are rarely perfectly independent. Under this assumption, the probability of all attributes given a class is simply the product of the per-attribute conditional probabilities, each of which is easy to compute from data. In the Naïve Bayes model, the designer must decide which attribute serves as the class on which the other attributes are conditioned when computing these probabilities. In practice, the model sometimes works surprisingly well despite the independence assumption, but in other cases it fails completely; its success depends entirely on the characteristics of the data.
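For continuous attributes, one common variant is Gaussian Naïve Bayes, which models each attribute within each class as a normal distribution; variants for counts or binary attributes use other distributions. The predicted class is the one that maximizes P(class) × P(x_1 | class) × ... × P(x_d | class), and the product over attributes is exactly where the independence assumption enters. The sketch below works with log-probabilities to avoid numerical underflow; the data and function names are hypothetical.

```python
from collections import defaultdict
from math import log, pi

def fit_gaussian_nb(X, y):
    """Estimate each class's prior and each attribute's mean and variance within the class."""
    rows_by_class = defaultdict(list)
    for x, label in zip(X, y):
        rows_by_class[label].append(x)
    model = {}
    for label, rows in rows_by_class.items():
        stats = []
        for col in zip(*rows):
            mean = sum(col) / len(col)
            var = sum((v - mean) ** 2 for v in col) / len(col) + 1e-9  # avoid zero variance
            stats.append((mean, var))
        model[label] = (len(rows) / len(X), stats)
    return model

def predict_nb(model, x):
    """Return the class maximizing log P(class) + sum_j log P(x_j | class)."""
    def log_score(label):  # unnormalized log posterior
        prior, stats = model[label]
        # Summing per-attribute log-likelihoods = multiplying independent probabilities.
        return log(prior) + sum(
            -0.5 * log(2 * pi * var) - (xj - mean) ** 2 / (2 * var)
            for xj, (mean, var) in zip(x, stats))
    return max(model, key=log_score)

# Hypothetical training data: two attributes per subject.
X = [(1.0, 2.0), (1.2, 1.8), (0.8, 2.2), (3.0, 4.0), (3.2, 4.1), (2.9, 3.8)]
y = ["control", "control", "control", "patient", "patient", "patient"]
model = fit_gaussian_nb(X, y)
print(predict_nb(model, (1.1, 2.1)), predict_nb(model, (3.1, 4.0)))  # control patient
```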

KNN is a type of instance-based learning, in which no computation is required before the actual classification or regression [26]. In other words, the given data itself can be regarded as the ‘learned’ model, and computations are performed only when predictions are needed. The basic idea is that the label of a data point can be predicted from the labels of its nearest neighbors, for example by majority vote for classification or by averaging for regression. To use this algorithm, one must therefore choose the parameter K (the number of neighbors) and a distance metric that determines which data points are nearest. Relationships among attributes should be considered carefully in the distance computation to achieve good performance. However, because of its simplicity, this algorithm often models real-world settings less well than other methods. In addition, prediction time grows with the number of stored data points and the number of attributes. Since prediction time generally matters more than training time, this disadvantage may be critical.
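A minimal sketch of KNN classification with Euclidean distance and majority voting. Because the distance mixes all attributes, the sketch first standardizes each attribute using the training data, one common way to keep an attribute measured on a large scale (here, age in years) from dominating the distance. There is no training step: all the work happens inside `knn_predict`, which is why prediction cost grows with the number of stored points and attributes. The data and names are hypothetical.

```python
from collections import Counter
from math import dist

def standardize(train_X, x):
    """Rescale each attribute to zero mean and unit variance using training
    statistics only, then apply the same scaling to the query point."""
    cols = list(zip(*train_X))
    means = [sum(c) / len(c) for c in cols]
    sds = [(sum((v - m) ** 2 for v in c) / len(c)) ** 0.5 or 1.0  # guard constant columns
           for c, m in zip(cols, means)]

    def scale(row):
        return tuple((v - m) / s for v, m, s in zip(row, means, sds))

    return [scale(row) for row in train_X], scale(x)

def knn_predict(train_X, train_y, x, k=3):
    """Majority vote among the k nearest training points; every distance is
    computed at prediction time."""
    scaled_train, scaled_x = standardize(train_X, x)
    nearest = sorted(range(len(scaled_train)),
                     key=lambda i: dist(scaled_train[i], scaled_x))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]

# Hypothetical attributes: (age in years, symptom score between 0 and 1).
X = [(25, 0.9), (30, 0.8), (60, 0.85), (28, 0.1), (55, 0.2), (62, 0.15)]
y = ["case", "case", "case", "control", "control", "control"]
print(knn_predict(X, y, (58, 0.88), k=3))  # case
```

Without the standardization step, age would dominate the distance: the query's three nearest neighbors by raw distance are the subjects aged 60, 55, and 62, two of whom are controls, so the vote would come out as "control".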

We have introduced five supervised learning models, which are summarized in Table 1. In general, SVM, GBM, and Random Forest perform better than the simpler models, Naïve Bayes and KNN [24,27-31]. However, depending on the data, simpler models sometimes perform better; which model fits best always depends on the characteristics of the data. In most real-world cases, it is hard to predict which model will work best before actually applying the models to the data. In some cases, only a small number of the measured attributes may contribute to solving the problem, even though many attributes were measured. In other cases, a complex ML model may overfit the given data so that it does not work well on unseen data, whereas simpler ML models work better because they avoid overfitting the training data. Therefore, one of the best ways to find out which ML model works is to apply all candidate models to the given data, choose the best-performing one, and then work backward from model performance to analyze the data. Otherwise, a thorough investigation of the data is needed before selecting an ML model.
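The overfitting point can be illustrated with a small hold-out experiment, using KNN only because it is short to write. With K = 1 the model is very flexible and simply memorizes the training set, including one deliberately mislabeled point, while K = 5 smooths over that point. All data are invented, and three held-out cases are far too few for a real evaluation.

```python
from collections import Counter
from math import dist

def knn_predict(train_X, train_y, x, k):
    """Majority label among the k training points nearest to x."""
    nearest = sorted(range(len(train_X)), key=lambda i: dist(train_X[i], x))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]

def accuracy(train_X, train_y, X, y, k):
    correct = sum(knn_predict(train_X, train_y, x, k) == label for x, label in zip(X, y))
    return correct / len(y)

# Hypothetical training data; the point (0.5, 0.5) sits among class A
# but carries label B, standing in for measurement or labeling noise.
train_X = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, 0.5),
           (4.0, 4.0), (5.0, 4.0), (4.0, 5.0), (5.0, 5.0)]
train_y = ["A", "A", "A", "A", "B", "B", "B", "B", "B"]
# Held-out cases that were not used for training.
test_X = [(0.6, 0.5), (0.2, 0.1), (4.5, 4.5)]
test_y = ["A", "A", "B"]

for k in (1, 5):
    train_acc = accuracy(train_X, train_y, train_X, train_y, k)
    test_acc = accuracy(train_X, train_y, test_X, test_y, k)
    print(f"K={k}: training accuracy {train_acc:.2f}, held-out accuracy {test_acc:.2f}")
# K=1: training accuracy 1.00, held-out accuracy 0.67
# K=5: training accuracy 0.89, held-out accuracy 1.00
```

The more flexible model looks perfect on the data it was trained on yet does worse on unseen cases, which is why performance should always be judged on data the model has not seen.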

DIAGNOSIS OF MENTAL ILLNESS USING DIFFERENT MACHINE LEARNING ALGORITHMS

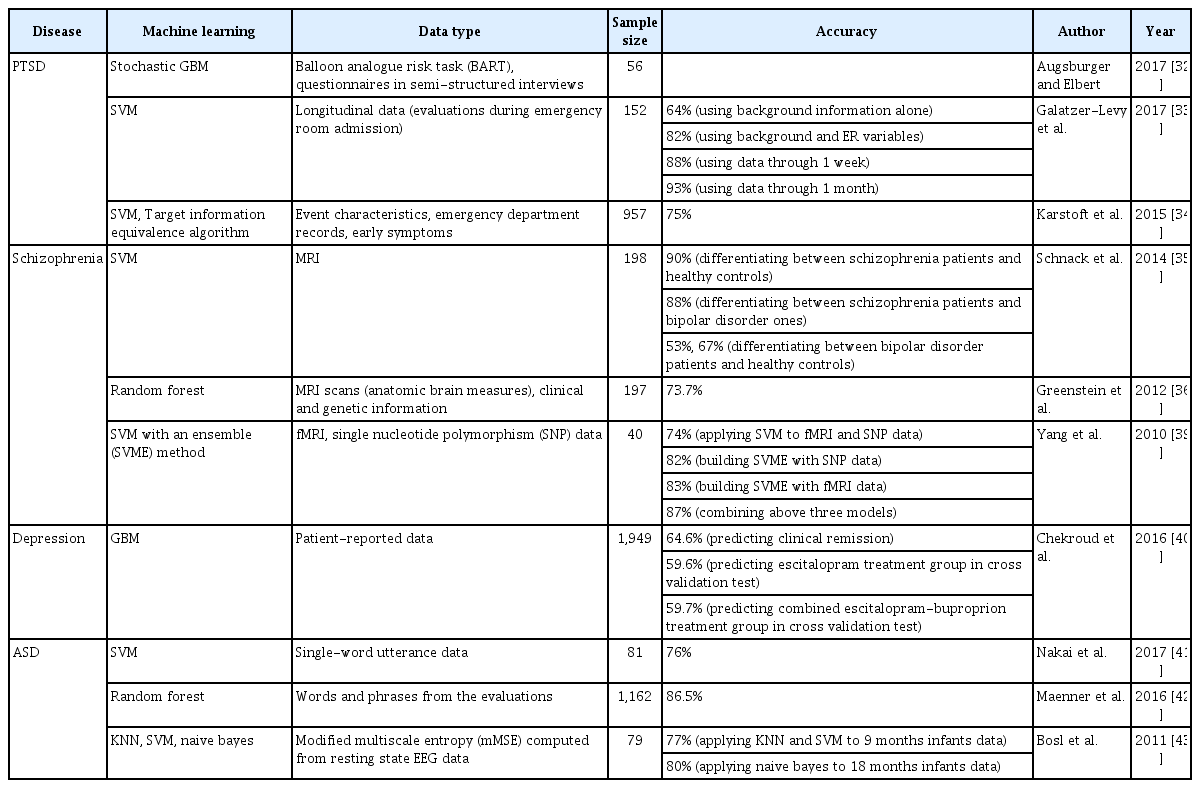

We searched for research papers on the diagnosis of mental illness using ML with article search engines. All articles were retrieved from SCOPUS, RISS, and PubMed using the keywords mental disorders, mental illness, diagnosis, machine learning, and big data. We examined a total of 59 articles, of which 10 papers related to mental illness were analyzed and summarized. We categorized them by type of mental illness and summarized each by its purpose in using ML, the techniques used, data type, sample size, and accuracy as the performance measure. Table 2 summarizes each study.

Post-traumatic stress disorder

Augsburger and Elbert [32] applied an ML technique to predict risk-taking behavior in refugees who had experienced trauma. The data set consisted of the Balloon Analogue Risk Task (BART) and interview questionnaires from 56 cases. The technique used was stochastic GBM, and the analysis was performed in R. This method was effective compared with conventional methods because many variables can be tested at the same time, even with relatively few samples. A limitation of this study was that the model was constructed from a small data set.

Galatzer-Levy et al. [33] predicted post-traumatic stress using SVM. The data set consisted of longitudinal data from 152 subjects, collected from emergency room (ER) admission following a traumatic incident. Latent growth mixture modeling (LGMM) was used to identify PTSD symptom severity trajectories, and its result served as the outcome variable in models built by ML. SVM-based recursive feature elimination was employed for feature selection, and SVM was used to predict trajectory membership; the SVM analysis was performed in MATLAB. Performance, reported as the mean area under the receiver operating characteristic curve (AUC), was 0.64 using only background information, 0.82 using background and ER variables, 0.88 using data through 1 week, and 0.93 using data through 1 month.
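Because AUC, rather than accuracy, is the performance measure in this and the next study, a short sketch may help in interpreting it. AUC equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case (ties count one half), so 0.5 corresponds to chance and 1.0 to perfect ranking. The pairwise computation below is quadratic in the sample size, which is fine for illustration; the scores and labels are invented.

```python
def auc(scores, labels):
    """AUC via its ranking interpretation: the share of (positive, negative)
    pairs in which the positive case gets the higher score; ties count 0.5."""
    pos = [s for s, label in zip(scores, labels) if label == 1]
    neg = [s for s, label in zip(scores, labels) if label == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical model scores (e.g. predicted risk) and true outcomes (1 = condition present).
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2]
labels = [1, 1, 1, 0, 0, 0]
print(round(auc(scores, labels), 3))  # 0.889: 8 of the 9 pairs are ranked correctly
```

Unlike accuracy, AUC does not depend on a particular decision threshold, which is one reason it is common when comparing prediction models built from different sets of variables.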

Karstoft et al. [34] used an ML method to predict PTSD. The data set comprised 957 trauma survivors and 68 features covering event characteristics, emergency department records, and early symptoms. A Target Information Equivalence Algorithm was applied to select an optimal set of variables from the large set of candidates, and SVM was used for prediction in MATLAB. Performance was reported as AUC, with a mean AUC of 0.75.

Schizophrenia

Schnack et al. [35] employed an ML method to classify patients with schizophrenia, patients with bipolar disorder, and healthy controls using magnetic resonance imaging (MRI) scans. MRI scans of 66 patients with schizophrenia, 66 patients with bipolar disorder, and 66 healthy subjects were used, and SVM was used to construct three models. The average accuracy of the model discriminating between schizophrenia patients and healthy controls was 90%, and that of the model separating patients with schizophrenia from those with bipolar disorder was 88%. The model distinguishing patients with bipolar disorder from healthy controls was less accurate, correctly classifying 53% of the patients with bipolar disorder and 67% of the healthy subjects.

Greenstein et al. [36] classified patients with childhood-onset schizophrenia (COS) and healthy controls. The data set consisted of 98 patients with COS and 99 controls, and comprised 74 anatomic brain MRI subregions together with clinical and genetic information. Random Forest was applied because it has lower error rates than other methods [37] and can determine the probability of disease from the feature set of brain regions [38]. The classification accuracy was 73.7%.

Yang et al. [39] used a hybrid ML method to classify schizophrenia patients and healthy controls, using data from 20 schizophrenia patients and 20 controls. SVM was applied to functional magnetic resonance imaging (fMRI) and single nucleotide polymorphism (SNP) data. An SVM ensemble (SVME) built from SNPs achieved an accuracy of 0.74, and another SVME built from voxels in the fMRI map achieved 0.82. An SVM classifier built on fMRI activation components obtained with independent component analysis achieved 0.83, and combining these three models yielded 0.87.

Depression

Chekroud et al. [40] developed an ML algorithm to predict clinical remission after a 12-week course of citalopram. The data set consisted of 1,949 patients with depression from level 1 of the Sequenced Treatment Alternatives to Relieve Depression trial. The 25 variables that best predicted the outcome were selected from 164 patient-reportable variables. GBM was used for prediction because it combines weakly predictive models into a stronger one, and it achieved an accuracy of 64.6%. When the model was validated on independent samples from the Combining Medications to Enhance Depression Outcomes (COMED) trial, it achieved an accuracy of 59.6% in the escitalopram treatment group (n=151) and 59.7% in the combined escitalopram-bupropion treatment group (n=134).

Autism spectrum disorders

Nakai et al. [41] applied an ML method to classify children with autism spectrum disorders (ASD) and children with typical development (TD). The data set consisted of single-word utterances from 81 children, 30 with ASD and 51 with TD. The voice analysis used SVM, implemented in MATLAB, which achieved an accuracy of 0.76. According to the results of this study, the ML method was superior to speech therapists' judgments when only single-word utterances were used as stimuli.

Maenner et al. [42] classified ASD using ML. Random Forest was employed for both feature selection and classification. The training data consisted of words and phrases from 5,396 evaluations of 1,162 children collected at the 2008 Georgia Autism and Developmental Disabilities Monitoring (ADDM) site, and 9,811 evaluations of 1,450 children from the 2010 Georgia ADDM surveillance were used to evaluate the classifier. Random Forest achieved a prediction accuracy of 86.5%.

Bosl et al. [43] applied ML methods to classify infants as being at high risk for ASD or as controls. The data consisted of modified multiscale entropy (mMSE) computed from resting-state EEG recordings of 79 infants, 46 at high risk for ASD and 33 healthy controls. The k-nearest neighbors (k-NN), SVM, and Naïve Bayes algorithms were used to find the best classifiers for these data. k-NN and SVM both achieved a statistically significant accuracy of 0.77 for infants aged 9 months. The highest accuracy among the statistically significant results, 0.80, was obtained by Naïve Bayes at 18 months.

DISCUSSION

The purpose of this paper is to provide information about the basic concepts of ML algorithms frequently used in the mental health area and about their actual applications in this domain. Analyses of mental health data using ML techniques have focused mainly on the supervised learning setting for classification. We reviewed PTSD, schizophrenia, depression, ASD, and bipolar disorder as mental health domains in which ML techniques were employed, and SVM, GBM, Random Forest, KNN, and Naïve Bayes have been applied in these domains.

Among ML techniques, SVM is the one most commonly used in mental health. It was employed in every domain we reviewed, and, as expected, most of the SVM classifiers built in these papers achieved accuracies above 75%. In the mental health domain, data are sparse, meaning that measured feature values are discrete and the features represent only a few characteristics of each data point. This data sparsity is one reason why SVM works well in this domain [27]. In some cases, other ML techniques such as GBM were used alongside it for feature selection. The ensemble methods GBM and Random Forest were also employed as the main algorithms for classifying mental health patients, because they can handle many features simultaneously without feature selection. On average they performed as well as SVM, but one classifier's accuracy was slightly below 60% on the test samples. This implies that an ML algorithm by itself does not guarantee high classification accuracy. KNN and Naïve Bayes were each used in only one of the studies we examined, together with SVM, but in some cases their performance was equal to or greater than that of SVM.

However, the studies we examined have several limitations. As noted above, some studies built their classifiers from small samples of fewer than 100 instances. The accuracy estimated on such samples may not generalize, because a small sample may not represent the entire population. This may be an unavoidable limitation given the finite resources of real-world clinical and diagnostic settings. Still, researchers in practice need to be aware that ML by itself cannot resolve this issue.
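One way to see why small samples limit generalization is to put an interval around an accuracy estimate. The sketch below uses the Wilson score interval for a proportion, assuming the accuracy was measured on n independent test cases (an assumption that cross-validated estimates satisfy only approximately). The source does not prescribe this or any other interval, and the accuracy value and sample sizes are hypothetical.

```python
from math import sqrt

def wilson_interval(accuracy, n, z=1.96):
    """Approximate 95% Wilson score interval for an accuracy measured on n cases."""
    denom = 1 + z ** 2 / n
    center = (accuracy + z ** 2 / (2 * n)) / denom
    half_width = z * sqrt(accuracy * (1 - accuracy) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width

# The same observed accuracy of 0.80 on a small and on a larger test set.
for n in (40, 400):
    low, high = wilson_interval(0.80, n)
    print(f"n={n}: {low:.3f} to {high:.3f}")
```

With these inputs the interval spans roughly 0.65 to 0.90 for 40 cases but only about 0.76 to 0.84 for 400 cases, so an accuracy reported from fewer than 100 cases is compatible with a wide range of true performance.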

Furthermore, many studies did not even describe why they chose a specific ML technique. As reviewed above, each method has its own strengths and weaknesses, and its performance depends on the purpose of the study and the properties of the data. In addition, the performance of ML algorithms generally depends heavily on human choices such as hyperparameter tuning and the data preprocessing needed to fit the models optimally. This is why no single algorithm can be called the best for all domains at all times. Researchers need to be circumspect in choosing an ML algorithm and should explain the rationale for their choice along with their other procedures. When researchers cannot tell in advance which ML algorithm is best for their research, several classifiers should be fitted to the data and one selected by cross-validation. For example, researchers may analyze their data with several different classifiers, such as SVM, KNN, and Naïve Bayes, and choose the algorithm with the highest accuracy, as Bosl et al. did [43].
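Selecting among classifiers by cross-validation can be sketched as follows. Each candidate is wrapped as a function taking training attributes, training labels, and a new case (a hypothetical interface chosen for brevity, so any classifier could be plugged in the same way); here the candidates are KNN with two values of K and a nearest-centroid classifier. The data are invented, and folds are assigned round-robin for simplicity. Reporting the winning candidate's cross-validation score slightly overstates its performance, because the same folds were used to choose it; nested cross-validation avoids this.

```python
from collections import Counter, defaultdict
from math import dist

def knn(k):
    """Return a fit-and-predict function for k-nearest-neighbors voting."""
    def fit_predict(train_X, train_y, x):
        nearest = sorted(range(len(train_X)), key=lambda i: dist(train_X[i], x))[:k]
        return Counter(train_y[i] for i in nearest).most_common(1)[0][0]
    return fit_predict

def nearest_centroid(train_X, train_y, x):
    """Assign x to the class whose mean attribute vector is closest."""
    groups = defaultdict(list)
    for row, label in zip(train_X, train_y):
        groups[label].append(row)
    centroids = {label: [sum(c) / len(rows) for c in zip(*rows)]
                 for label, rows in groups.items()}
    return min(centroids, key=lambda label: dist(centroids[label], x))

def cross_val_accuracy(fit_predict, X, y, n_folds=3):
    """k-fold cross-validation: every instance is predicted exactly once by a
    model that never saw it in training. Folds are assigned round-robin here;
    in practice the data should be shuffled (and stratified by class) first."""
    correct = 0
    for fold in range(n_folds):
        train = [i for i in range(len(X)) if i % n_folds != fold]
        train_X, train_y = [X[i] for i in train], [y[i] for i in train]
        correct += sum(fit_predict(train_X, train_y, X[i]) == y[i]
                       for i in range(len(X)) if i % n_folds == fold)
    return correct / len(X)

# Hypothetical two-attribute data for two groups of subjects.
X = [(0.0, 0.0), (1.0, 0.2), (0.2, 1.0), (0.8, 0.9), (0.4, 0.5), (1.2, 0.6),
     (4.0, 4.0), (5.0, 4.2), (4.2, 5.0), (4.8, 4.9), (4.4, 4.5), (5.2, 4.6)]
y = ["control"] * 6 + ["patient"] * 6

candidates = {"1-NN": knn(1), "5-NN": knn(5), "nearest centroid": nearest_centroid}
scores = {name: cross_val_accuracy(model, X, y) for name, model in candidates.items()}
best = max(scores, key=scores.get)
print(scores, "->", best)
```

The same loop also covers hyperparameter choice: comparing `knn(1)` with `knn(5)` is simply tuning K by cross-validation.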

Acknowledgements

This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (NRF-2017R1C1B1 008002).

Notes

The authors have no potential conflicts of interest to disclose.